RGTranCNet: Effective image captioning model using cross-attention and semantic knowledge

DOI:

https://doi.org/10.15625/2525-2518/22381

Keywords:

Image captioning, cross-attention mechanism, transformer, ConceptNet knowledge base, relationship graph

Abstract

Generating captions for images is a key endeavour that connects visual processing and linguistic analysis. However, techniques relying on long short-term memory (LSTM) units and conventional attention systems face restrictions in managing intricate interconnections and supporting effective parallel processing. Additionally, precisely depicting elements absent from the training data presents a significant challenge. To overcome these obstacles, the present research introduces an innovative framework for image description, employing a Transformer architecture augmented by cross-attention processes and semantic insights sourced from ConceptNet. This setup follows an encoder-decoder paradigm, where the encoder derives features from object areas and assembles a graph of associations to depict the visual scene. At the same time, the decoder merges visual and semantic aspects through cross-attention to produce captions that are both accurate and varied. The inclusion of ConceptNet-derived knowledge enhances precision, particularly when handling items not encountered during training. Tests conducted on the standard MS COCO dataset reveal that this approach outperforms recent state-of-the-art approaches. Moreover, the semantic integration strategy outlined here can be readily adapted to alternative image captioning systems.
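The cross-attention step mentioned in the abstract can be illustrated with a minimal sketch of scaled dot-product attention, in which queries come from the decoder (caption word states) and keys and values come from the encoder (object-region features). This is not the authors' implementation: the learned projection matrices, multiple heads, relationship graph, and ConceptNet features are omitted, and all names and toy values below (`cross_attention`, `word_states`, `region_feats`) are hypothetical.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain matrix product of a (n x k) and b (k x m)."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(a: Matrix) -> Matrix:
    """Swap rows and columns."""
    return [list(col) for col in zip(*a)]


def softmax(row: List[float]) -> List[float]:
    """Numerically stable softmax over one row of scores."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(queries: Matrix, keys: Matrix, values: Matrix) -> Tuple[Matrix, Matrix]:
    """Scaled dot-product attention without learned projections.

    queries: decoder-side states, one row per caption token.
    keys, values: encoder-side features, one row per image region.
    Returns the attended context vectors and the attention weights.
    """
    d_k = len(keys[0])
    scores = matmul(queries, transpose(keys))
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, values), weights


# Toy data (assumed for illustration): 2 caption tokens, 3 image regions, dimension 2.
word_states = [[1.0, 0.0], [0.0, 1.0]]
region_feats = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

context, weights = cross_attention(word_states, region_feats, region_feats)
```

Each row of `weights` is a probability distribution over the image regions for one caption token, and the matching row of `context` is the weighted mix of region features that the decoder would consume when predicting the next word.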

License

This work is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License.

Vietnam Journal of Science and Technology (VJST) is an open-access, peer-reviewed journal. All published articles are free for everyone to read and download. Articles are published under the terms of the Creative Commons Attribution-ShareAlike 4.0 International (CC BY-SA) License, which permits use, distribution, and reproduction in any medium, provided the original work is properly cited and the ShareAlike terms are followed.

Copyright on any research article published in VJST is retained by the respective author(s), without restrictions. Authors grant VAST Journals System a license to publish the article and identify itself as the original publisher. By giving VJST permission to publish their research work, either via the VJST journal portal or another channel, the author(s) agree to all the terms and conditions of the https://creativecommons.org/licenses/by-sa/4.0/ license and the terms and conditions set by VJST.

Authors are responsible for securing all necessary copyright permissions for the use of third-party materials in their manuscript.

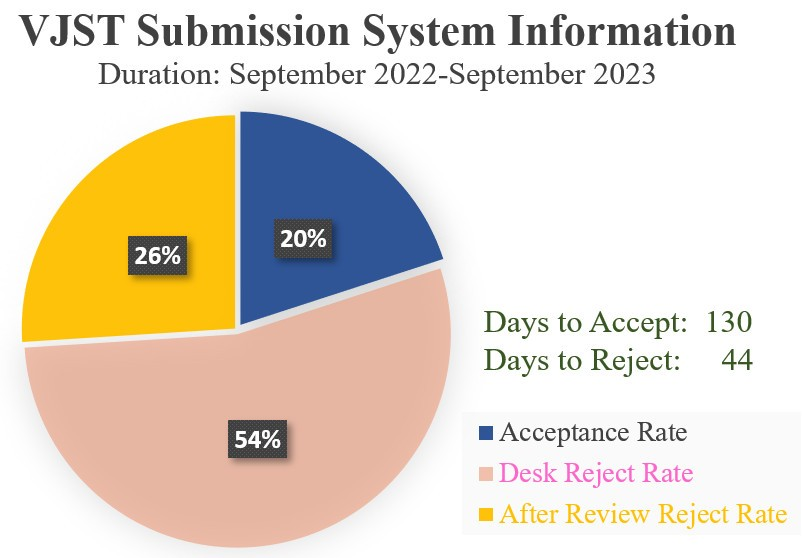

Vietnam Journal of Science and Technology (VJST) is pleased to notice: